How One Candidate Beat 99 Others for a $200k Databricks Data Engineer Role

The exact answers that survived each of 4 interview rounds

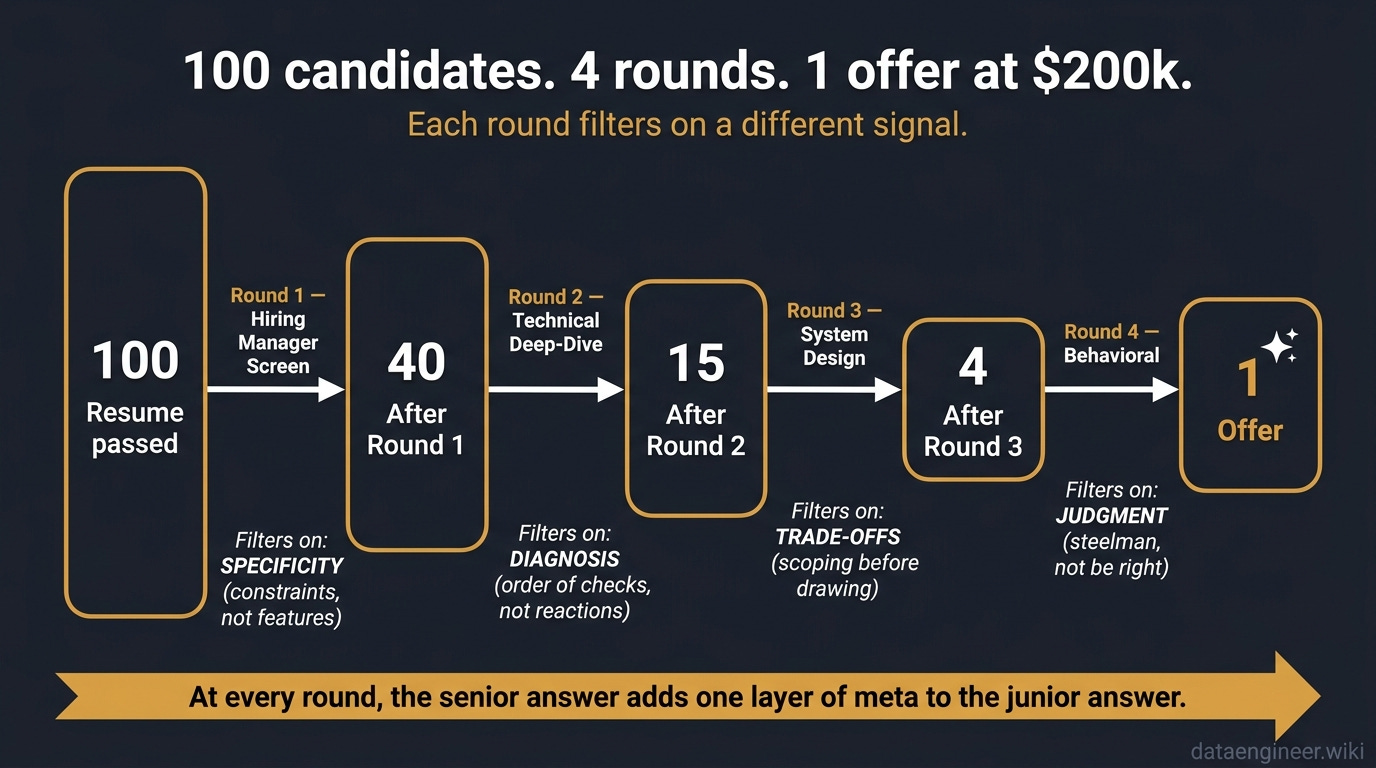

A Senior Databricks Data Engineer role at a Fortune 500. Total comp around $200k. 100 applicants made it past the resume screen. Four rounds later, one offer went out.

The funnel looked like this: 100 candidates entered Round 1. 40 made it to Round 2. 15 reached Round 3. 4 saw Round 4. 1 got the call.

Most of the 99 who got cut were not unqualified. They could spell Delta Lake, configure a cluster, and ship a pipeline. They lost because each round filters on a different signal, and they kept giving the answer the round before was rewarding.

This is what survived at each stage, and what got cut.

Round 1: Hiring Manager Screen (100 to 40)

The question: “Walk me through your last Databricks pipeline.”

What they are actually testing: Can you describe a system, or only the components inside it? The hiring manager has read your resume. They are not asking what tools you used. They are asking whether you understand what the tools were for.

The Junior Answer

“We use Auto Loader to ingest from cloud storage into Bronze. Then Lakeflow Declarative Pipelines for Silver and Gold. Unity Catalog for governance. The orchestration runs through Workflows.”

This sounds competent. It is also a bullet list of features. There is no business, no scale, no constraint, no decision. A new grad reading the Databricks marketing site can produce this answer in 30 seconds.

The interviewer’s note: Knows the tools. No evidence they have run a system in production.

The Senior Answer

“It is a 2 TB per day ingest from 80 source systems. The SLA is 30 minutes for finance tables, 4 hours for analytics. Two of those sources have weekly schema drift, so they go through a quarantine path before merging into the main flow. Auto Loader handles the file discovery, Lakeflow Declarative Pipelines handles the medallion logic, and the orchestration layer routes failed batches to a separate retry queue so we do not block the SLA tables behind the noisy ones.”

Same tools. Different post. The senior answer leads with constraints (volume, source count, SLA, schema drift) and only mentions tools as the means to satisfy them. The interviewer hears: this person makes trade-offs.

Follow-ups They Will Ask

“What was your strictest SLA, and what would have happened if you missed it?”

“Walk me through a failure you had in this pipeline and what you changed.”

“If you had to cut the cloud bill in half, where would you start?”

If you cannot answer these in 60 seconds with specific numbers, your Round 1 answer was a tour, not a system.

Round 1 Cheat Sheet

Open with scale + SLA + constraint, not tool names.

Mention every tool only once. Tools are nouns, not the story.

Have one schema drift / late data / backfill war story ready.

Land on a trade-off you made and why.

Round 2: Technical Deep-Dive (40 to 15)

The question: “A job that ran in 30 minutes now takes 4 hours. Where do you start?”

What they are actually testing: Diagnosis or reaction. Engineers who reach for cluster size first cost the company money every week of their tenure. Engineers who reach for diagnosis save it.

The Junior Answer

“Increase the cluster size. Check executor memory. Maybe the dataset got bigger.”

This is the panic answer. It treats every performance problem as the same problem and the same fix: throw hardware at it. It also reveals the candidate has never had to defend a cluster bill to a finance partner.

The Senior Answer

“First, Spark UI. I look at three things in order. Stage durations: which stage is consuming the new time. Task distribution within the slow stage: if one task is 10x the median runtime, it is data skew, not a resource problem. Shuffle read volume: if a stage is moving an order of magnitude more data than the others, the join strategy is wrong, probably a broadcast that is no longer eligible. Cluster size is the last thing I touch, because doubling the cluster on a skewed job just makes you pay twice for the same bottleneck.”

The senior answer is not about Spark internals. It is about a diagnostic order. Three checks, ranked by what they rule out, ending at the conclusion that resources are usually the symptom, not the cause.

Follow-ups They Will Ask

“How would you confirm it is skew and not just one slow worker node?”

“If it is skew on a join key, what would you actually do about it?”

“Imagine the slow stage is a write to Delta. Where do you look?”

The Diagnostic Order Senior Engineers Use

Stage durations - which stage owns the new time? Without this, every other check is guessing.

Task distribution within that stage - flat distribution means resource problem; spike means skew.

Shuffle read / write volume - large shuffles indicate join strategy or partitioning issues.

Spilled bytes / disk read - appears before OOM; tells you memory pressure is real, not noise.

Input file count - tiny files inflate task overhead; large files block parallelism.

Resources - only after the first five rule out everything else.

The junior reaches for step 6 first. The senior reaches for step 1 first. The order is the answer.

Round 2 Cheat Sheet

Always describe an order of checks, not a list of things you might try.

Name the specific Spark UI panels you would open.

End with: “resources last.” That single phrase signals seniority.

If you are stuck, ask: “Has the input data changed, or has the cluster changed?” - that is a senior-level scoping question.

Round 3: System Design (15 to 4)

The question: “Design a near-real-time fraud detection pipeline. 50,000 events per second.”

What they are actually testing: Whether you design for the problem or for the diagram. This is where the gap explodes. Round 3 is the round that decides whether you get the offer.

The Junior Answer

The junior starts drawing immediately. Kafka into Structured Streaming, Delta Lake for storage, a dashboard at the end. Maybe they add a feature store and a model serving box. They fill the whiteboard in 4 minutes and look pleased.

There are two problems with this answer. First, it works for any volume from 50 events per second to 5 million. The number 50,000 in the question never enters the design. Second, there is no decision visible - every component was the default of its category.

The Senior Answer

“Three questions before I draw anything.

What is the latency SLA? Sub-second is a different system from sub-minute.

What is the cost ceiling? An always-on real-time cluster has a floor that batch does not.

What is the false-positive tolerance?

That decides whether the model serves inline or batches scoring with a confidence threshold.”

Then they draw - but the drawing is a consequence of the answers, not a starting point.

Sub-second SLA pushes ingestion toward the streaming mode optimized for low latency, not the default micro-batch.

A tight cost ceiling kills always-on compute and forces a decision: pay the latency cost of cold starts, or pay the dollar cost of warm pools.

Low false-positive tolerance forces an inline scoring path with a fast feature lookup. High tolerance allows asynchronous scoring with reprocessing on flagged events.

Follow-ups They Will Ask

“What happens to in-flight events during a deploy?”

“How do you handle a 10x spike during a holiday sale?”

“Walk me through how a single fraudulent event gets from your ingestion point to a blocked transaction.”

The 5-Minute Opening That Wins System Design Rounds

Spend the first 5 minutes asking, not drawing. The interviewer is judging whether you would design something useful or something impressive.

Minute 1: Latency SLA, cost ceiling, accuracy tolerance. Three numbers.

Minute 2: Failure mode requirements. Replay? Exactly-once? Recovery time?

Minute 3: Volume profile. Is 50K/sec the peak or the average? What is the spike?

Minute 4: Read patterns. Who consumes the output and how often?

Minute 5: Sketch the highest-level boxes - ingest, process, serve - and write the constraints next to each one.

Junior engineers think the system design round rewards architecture knowledge. It rewards scoping discipline. The architecture is the part that flows out of the scoping if you did the scoping right.

Round 3 Cheat Sheet

Never draw before you scope. Three questions minimum.

Anchor every component choice to a stated constraint.

When you draw, write the constraint next to the box: “ingest - sub-second SLA”, “store - 90-day replay”, “serve - 200ms inline.”

Volunteer the trade-off you rejected: “I considered always-on streaming, but the cost ceiling forces this hybrid path.”

Round 4: Behavioral (4 to 1)

The question: “Tell me about a time you pushed back on a technical decision.”

What they are actually testing: Judgment, not correctness. The interviewer assumes you are technically competent - you would not be in Round 4 otherwise. They are deciding whether you can disagree without burning trust, and whether you learn from the rounds you lose.

The Junior Answer

“My team wanted to use a third-party tool for ingestion. I thought we should build it ourselves on Auto Loader. I made my case, they pushed back, I explained why I was right, and eventually they agreed. The pipeline shipped on time.”

This is a correctness story. It says: I was right, I convinced people, I won. The interviewer hears: this person frames disagreements as wins and losses. That is the engineer who blocks the team in a debate they should have lost gracefully.

The Senior Answer

“My team wanted to migrate from a stable batch pipeline to a near-real-time one. I pushed back.

I started by laying out their reasoning: stale dashboards were costing the analytics team meeting time, and the executive ask was real.

Then I presented the trade-off with numbers - the migration would consume one engineer for two quarters and roughly double the compute footprint, while the underlying business decisions ran on weekly cycles, not hourly.

I proposed a middle path: a 15-minute refresh on the three dashboards that mattered, instead of a full architectural shift.

We did that. Looking back, I should have surfaced the cost numbers in the first meeting instead of the third - by the time I had them, the team had already invested emotional energy in the bigger plan.”

Three things this answer does that the junior version does not.

Steelmans the other side first. The interviewer hears: this person can hold the opposing view in their head before defending their own.

Quantifies the trade-off. Not “it was expensive” but “two quarters of one engineer plus roughly double compute.”

Names what they would do differently. Not “and I was right” but “I should have surfaced the cost numbers earlier.” That is the leadership signal.

The STAR-T Template for Databricks Behavioral

Use this for any “tell me about a time” question. STAR with a T at the end - Trade-off - is what separates senior answers from competent ones.

Situation: Specific scenario, with team size, system, and stakes. 2 sentences.

Task: What you specifically owned. Not what the team did. 1 sentence.

Action: What you did, in three steps. Always include steelmanning the other side. 3-4 sentences.

Result: Quantified outcome. Latency, cost, hours saved, escalations avoided. 1-2 sentences.

Trade-off / Reflection: What you would do differently, or what cost you accepted. 1 sentence.

The T is the part juniors skip. It is the part the interviewer is most actively scoring in Round 4.

Round 4 Cheat Sheet

Never make your behavioral story a story about being right.

Start every answer with the other side’s reasoning.

End every answer with what you would do differently.

Have one story ready for each of: pushback, missed deadline, cross-team conflict, mentoring, scope cut.

The Verdict - One Pattern Across All Four Rounds

Each round filters on a different signal. The 99 candidates who got cut did not give wrong answers - they gave answers that would have passed the previous round.

Round 1 filters on specificity: can you describe a system with constraints, not a feature list?

Round 2 filters on diagnosis over reaction: do you reach for an order of checks, or for the size of the cluster?

Round 3 filters on trade-offs over solutions: do you scope before you draw, or do you draw the canonical reference architecture?

Round 4 filters on judgment over correctness: do you steelman the other side and reflect, or do you tell stories about being right?

Read those four lines again. The pattern is the same. At every round, the senior answer adds one layer of meta to the junior answer. Constraints around the components. Order around the checks. Scoping around the design. Reflection around the disagreement.

That layer of meta is what an extra $50,000 in total comp is paying for. Not deeper Spark knowledge. Not more years on the resume. The ability to step one level up from the obvious answer and explain why the answer is the answer.

The 99 who got cut had the knowledge. They were missing the layer of meta.

Self-Assessment Scorecard

Rate yourself 1 (junior) to 5 (senior) on each round.

Round 1 - Specificity: When you describe your last pipeline, do you lead with scale + SLA + constraint in the first 30 seconds, or do you lead with tool names?

Round 2 - Diagnostic order: For a slow job, can you give a ranked order of checks ending in “resources last,” or do you list things you would try?

Round 3 - Scoping discipline: In a system design round, can you spend the first 5 minutes asking instead of drawing without panicking?

Round 4 - Judgment: Can you tell a behavioral story where you lost an argument and learned from it, without it sounding like a setup for being right later?

Total score:

16-20: Senior territory. Round 4 is where you sharpen, not where you fail.

11-15: Mid-to-senior transition. Round 3 is your most likely cut.

6-10: Mid-level. Round 2 is your bottleneck - the diagnostic order is the fastest fix.

0-5: Junior. Round 1 is where to start. Practice describing your current pipeline in scale-SLA-constraint language for 30 seconds without saying a single tool name.

Which round would have filtered you out? Drop your score in the comments - and the round you would prepare for first.